Bonus abuse tactics fraud teams can’t catch (and why AI must take over)

Bonus abusers have industrialised. They run syndicate operations with dedicated roles, shared intelligence networks, and automation tools purpose-built to drain promotional budgets.

Most operators are still fighting them with spreadsheets and rule sets designed a decade ago. That gap is where your marketing ROI is disappearing.

Fraud teams can still catch the obvious attempts. But today’s bonus abuse tactics are designed to look exactly like legitimate play, and they are succeeding. This article breaks down how modern bonus abuse actually works, why traditional detection methods are losing the battle, and why AI-driven detection is the only credible response.

The evolution of bonus abuse

In the early days of iGaming, bonus abuse was relatively unsophisticated. A single player might register multiple accounts with different email addresses or repeatedly target welcome offers until an operator blocked them. Risk teams could usually identify these attempts with simple checks and manual reviews.

Today, bonus abuse has evolved into a professionalised practice, fuelled by easily accessible tools, forums where fraudsters exchange strategies, and the growing value of promotional campaigns. What was once a fringe activity has become a steady revenue drain.

Fraudsters no longer act alone. They work in groups, coordinate activity across brands, and use data-driven methods to stay under the radar. Just as importantly, they’ve learned how to mimic the behaviours of legitimate players, making their activity blend seamlessly into normal customer patterns.

The result is a challenge that has moved far beyond what traditional controls were designed to handle. Bonus abuse is no longer just a compliance or risk issue. It has become a material threat to operator margins.

Real-world bonus abuse tactics operators need to know

Modern bonus abuse is not about a single fraudster slipping through the cracks. It has moved on to organised, persistent methods designed to bypass detection and quietly drain promotional budgets.

Some of the most common and costly tactics you should know about include:

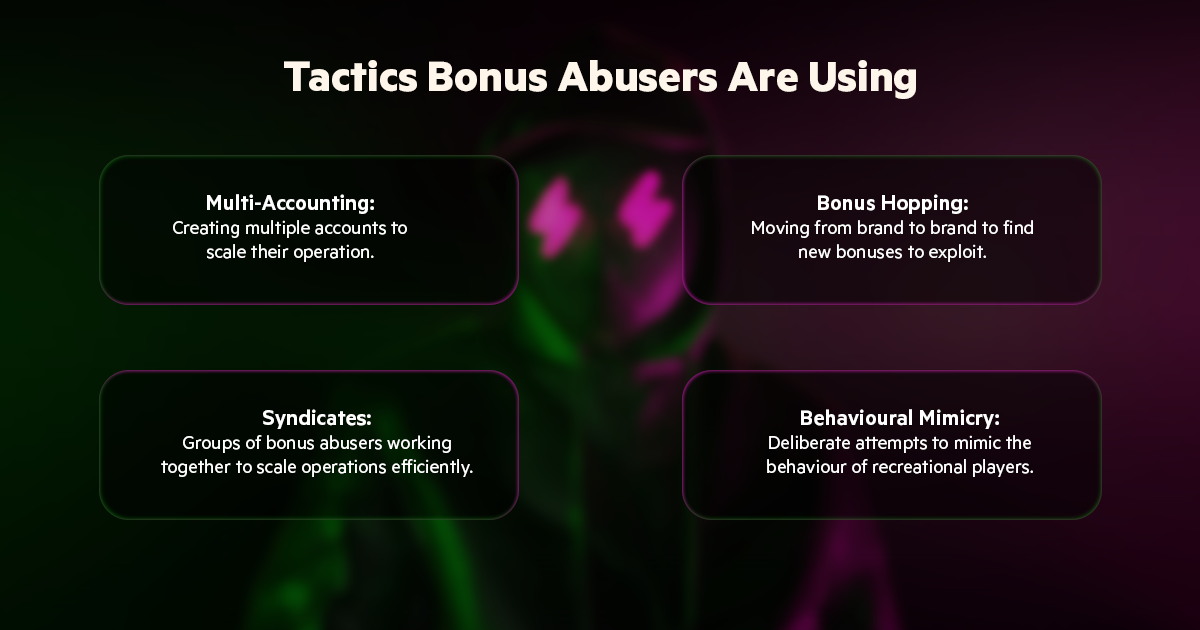

Multi-accounting

Fraudsters create multiple accounts to repeatedly claim bonuses, often at a scale that far exceeds what manual reviews can handle.

Disposable emails, fake personal details, and stolen identities are used to pass KYC checks. In some cases, fraudsters employ device farms or VPNs to disguise their digital fingerprints, making dozens of accounts appear to come from different households.

To a fraud team, each account can look legitimate in isolation, but the collective activity represents significant financial losses.
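To illustrate how accounts that look clean in isolation can still be tied together, here is a minimal sketch that groups accounts sharing any identifier, using a union-find structure. The field names (`device`, `card`) and the sample records are hypothetical, and this is not a description of any particular vendor's method. Real fingerprints are noisier and would need fuzzy matching.

```python
from collections import defaultdict

def link_accounts(accounts):
    """Group account IDs that share at least one identifier value.

    `accounts` maps an account ID to a dict of identifier fields.
    Field names are illustrative assumptions, not a real schema.
    """
    parent = {acc_id: acc_id for acc_id in accounts}

    def find(x):
        # Follow parent pointers to the group root, shortening the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    # Remember the first account seen for each (field, value) pair;
    # any later account with the same pair joins that account's group.
    first_seen = {}
    for acc_id, attrs in accounts.items():
        for pair in attrs.items():
            if pair in first_seen:
                union(first_seen[pair], acc_id)
            else:
                first_seen[pair] = acc_id

    groups = defaultdict(set)
    for acc_id in accounts:
        groups[find(acc_id)].add(acc_id)
    # Only groups with more than one account are interesting.
    return [group for group in groups.values() if len(group) > 1]

# Hypothetical sample: A1 and A2 share a card, A2 and A3 share a device.
accounts = {
    "A1": {"device": "d-01", "card": "c-11"},
    "A2": {"device": "d-02", "card": "c-11"},
    "A3": {"device": "d-02", "card": "c-12"},
    "B1": {"device": "d-09", "card": "c-30"},
}
linked = link_accounts(accounts)
print(linked)
```

In this sample, A1 and A3 share nothing directly, yet they end up in the same group through A2. That transitive link is exactly what a reviewer looking at one account at a time would miss.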

Bonus hopping

Rather than sticking with one operator, abusers move from brand to brand in search of new promotions. They track offers across the market, pounce on high-value campaigns, and cycle through bonuses in a way that resembles genuine player churn.

From an operator’s perspective, this behaviour is difficult to distinguish from standard customer acquisition patterns, but the revenue gained from these players is almost never sustainable.

Syndicates

What makes today’s bonus abuse especially damaging is the collaborative element. Syndicates form groups where responsibilities are divided: one person acquires or creates identities, another manages account registrations, while another handles gameplay and withdrawals.

This division of labour allows them to efficiently scale operations, moving large sums across multiple accounts without attracting immediate suspicion.

Syndicates are also adept at sharing intelligence. They identify new loopholes, weakly enforced KYC processes, and markets where oversight is lighter, which makes them hard to contain once they spot an opening.

Behavioural mimicry

Perhaps the most sophisticated tactic is the deliberate attempt to imitate the behaviours of genuine recreational players. Abusers stagger deposits, vary bet sizes, and even create deliberate losses to avoid appearing ‘too lucky’.

Some will maintain accounts over long periods, mixing normal-looking play with targeted bonus exploitation. To a human reviewer, this activity looks authentic. But at scale, these patterns form clusters that are only visible through advanced data analysis.
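As a rough sketch of how such clusters can surface, the example below reduces each account's deposit timeline to a coarse "signature" (the gaps between deposits, rounded to the hour) and groups accounts whose signatures match. The function names, the one-hour bucket, the minimum group size, and the sample timelines are all assumptions made for illustration. A production system would use far richer features and tolerant matching.

```python
from collections import defaultdict

def deposit_signature(deposit_hours, bucket=1.0):
    """Return the gaps between consecutive deposits, rounded into buckets.

    `deposit_hours` holds deposit times as hours since account creation.
    """
    ordered = sorted(deposit_hours)
    return tuple(round((later - earlier) / bucket)
                 for earlier, later in zip(ordered, ordered[1:]))

def find_lookalike_groups(timelines, min_size=3):
    """Group accounts whose deposit signatures are identical."""
    groups = defaultdict(list)
    for account_id, hours in timelines.items():
        signature = deposit_signature(hours)
        if signature:  # accounts with fewer than two deposits have no gaps
            groups[signature].append(account_id)
    # Keep only signatures shared by at least `min_size` accounts.
    return {sig: ids for sig, ids in groups.items() if len(ids) >= min_size}

# Hypothetical timelines: P1-P3 start at different times but
# follow the same rhythm of roughly 6 hours, then roughly 24 hours.
timelines = {
    "P1": [0.0, 6.1, 30.0],
    "P2": [2.0, 7.9, 32.2],
    "P3": [5.0, 11.0, 35.0],
    "P4": [1.0, 2.0, 50.0],
    "P5": [3.0],
}
suspicious = find_lookalike_groups(timelines)
print(suspicious)
```

Each of P1, P2 and P3 looks like an ordinary player on its own. Only the comparison across accounts shows that all three follow the same staggered schedule.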

The financial cost is real, but the subtler damage is strategic: every dollar that reaches an abuser is a dollar that didn’t build loyalty with a genuine player.

Multiply that across a quarter’s worth of campaigns, and what looks like a healthy acquisition number starts to look like a subsidised fraud operation.
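The arithmetic behind that shift is simple. The sketch below compares the headline cost per new account with the cost per genuine player once an assumed share of abusive sign-ups is removed. Every figure here is hypothetical and chosen only to show the calculation.

```python
def cost_per_genuine_player(promo_spend, new_accounts, abuse_share):
    """Promotional spend divided by the accounts that are not abusive.

    `abuse_share` is the assumed fraction of new accounts that are abusers.
    """
    genuine_accounts = new_accounts * (1 - abuse_share)
    if genuine_accounts <= 0:
        raise ValueError("no genuine accounts left to divide by")
    return promo_spend / genuine_accounts

# Hypothetical campaign: 100,000 in bonuses, 2,000 new accounts.
headline = cost_per_genuine_player(100_000, 2_000, abuse_share=0.0)
adjusted = cost_per_genuine_player(100_000, 2_000, abuse_share=0.2)
print(f"headline: {headline:.2f}, adjusted: {adjusted:.2f}")
```

Under these assumed numbers, a 20% abuse share raises the real cost of each genuine player by a quarter, even though the reported sign-up count has not changed.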

Why human-only detection can’t stop modern bonus abuse

Rulebooks, spreadsheets and manual reviews were never designed for this level of complexity. They work well for clear-cut cases, but modern abuse hides in the shadows: subtle, distributed behaviours stretched across thousands of accounts, brands and geographies.

A human reviewer can assess a handful of suspicious accounts, but they cannot reliably connect tiny signals scattered across millions of transactions.

Operational constraints make things worse. Fraud teams are typically understaffed and reactive, triaging alerts rather than preventing systemic leakage.

Rules-based systems generate high false-positive rates, forcing teams either to waste time on innocent customers or to tune thresholds so conservatively that clever abusers slip through. Siloed data (marketing, payments, CRM, and gaming logs kept in separate systems) further prevents the holistic view needed to spot cross-account patterns.
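The threshold trade-off can be made concrete with a small sketch. It applies a single static rule ("flag any account with more than N bonus claims") to a labelled sample and counts false positives and missed abusers at several thresholds. The rule, the sample, and the labels are invented for illustration.

```python
def evaluate_rule(accounts, max_bonus_claims):
    """Apply a static rule and count its two kinds of error.

    `accounts` is a list of (bonus_claims, is_abuser) pairs.
    Returns (false_positives, missed_abusers).
    """
    false_positives = sum(
        1 for claims, is_abuser in accounts
        if claims > max_bonus_claims and not is_abuser
    )
    missed_abusers = sum(
        1 for claims, is_abuser in accounts
        if claims <= max_bonus_claims and is_abuser
    )
    return false_positives, missed_abusers

# Hypothetical labelled sample: (bonus claims this month, known abuser?)
sample = [
    (1, False), (2, False), (2, True), (3, False),
    (3, True), (4, False), (5, True), (6, True),
]

results = {t: evaluate_rule(sample, t) for t in (1, 3, 5)}
for threshold, (fp, missed) in results.items():
    print(f"threshold {threshold}: {fp} false positives, {missed} missed abusers")
```

In this sample no threshold gets both numbers to zero: the strict setting flags three innocent players, and the lenient one misses three abusers. A single static cut-off cannot separate abusers who deliberately keep their claim counts in the normal range.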

Crucially, fraudsters iterate faster than most operators can respond. They adapt tactics, probe weak spots and test new automation techniques. By the time a pattern is recognised and a rule deployed, the abuse has already cost real money.

For finance leaders, that translates into hidden revenue loss, inflated acquisition costs and a steady erosion of marketing ROI.

Human judgement remains essential, but as a partner to machine intelligence, not the sole line of defence.

How AI bonus abuse detection works

Detecting bonus abuse today is less about flagging individual suspicious accounts and more about connecting signals scattered across thousands of players, brands, and transactions. That is a data problem no human team can solve at speed. AI can.

Where rules-based systems wait for patterns to repeat, AI models learn continuously, spotting the coordinated ring of 200 accounts before the second withdrawal, not the hundredth. Where manual reviews catch last month’s tactics, adaptive models are already mapping next month’s.

Cross-account pattern recognition means that abuse which looks perfectly legitimate in isolation becomes visible the moment it is viewed at scale across devices, geographies, and timeframes.

The practical result for operators is straightforward. Promotional budgets reach real players. Acquisition costs reflect genuine growth. Fraud teams spend their time on strategy and complex investigations, not triaging thousands of low-confidence alerts generated by outdated rule sets. That is what it means to stop subsidising abusers.

That is precisely what Bonus Guardian is built for. Bonus Guardian is EveryMatrix’s AI-powered tool against bonus abusers, built inside EngageSuite for the current needs of iGaming operators.

It continuously monitors player behaviour across accounts, devices, and geographies, so when, for example, 50 accounts start exhibiting the same staggered deposit pattern and deliberate loss behaviour, Bonus Guardian flags the ring before any of them reach withdrawal, not after. The result is abuse caught at the point of exploitation, not discovered in a post-campaign audit.

Stay ahead of bonus abusers or risk?

Bonus abuse is no longer a minor operational nuisance. It is a material threat to revenue and marketing ROI. Operators that rely solely on manual reviews or static rules risk leaving significant amounts of promotional spend unprotected, as sophisticated abusers operate in ways that mimic genuine players and evade traditional detection methods.

Forward-looking operators are already recognising that human-only detection is nearing obsolescence. By integrating AI-driven tools like Bonus Guardian, they can identify subtle patterns, block abuse in real time, and protect their margins without overburdening fraud teams.

Ensuring bonuses reach genuine players is not just about preventing loss. It is about maximising the return on marketing investments and maintaining competitive advantage.

The operators winning this battle have stopped treating bonus abuse prevention as a cost centre and started treating it as a revenue protection function. They are not asking whether AI is worth the investment. They are asking how quickly they can deploy it, because every week of delay is another week of promotional budget funding someone else’s operation. Bonus Guardian was built for exactly this moment.

See Bonus Guardian’s full capabilities here.
